AI Impossible Tic Tac Toe

This was a project inspired from watching Harvard's CS50 AI course.

As the title suggests, it's impossible for the player to win against this AI as it will always choose the most optimal move. It's made with python and pygame so click here to view the repositroy and download the relevent files for mac or windows.

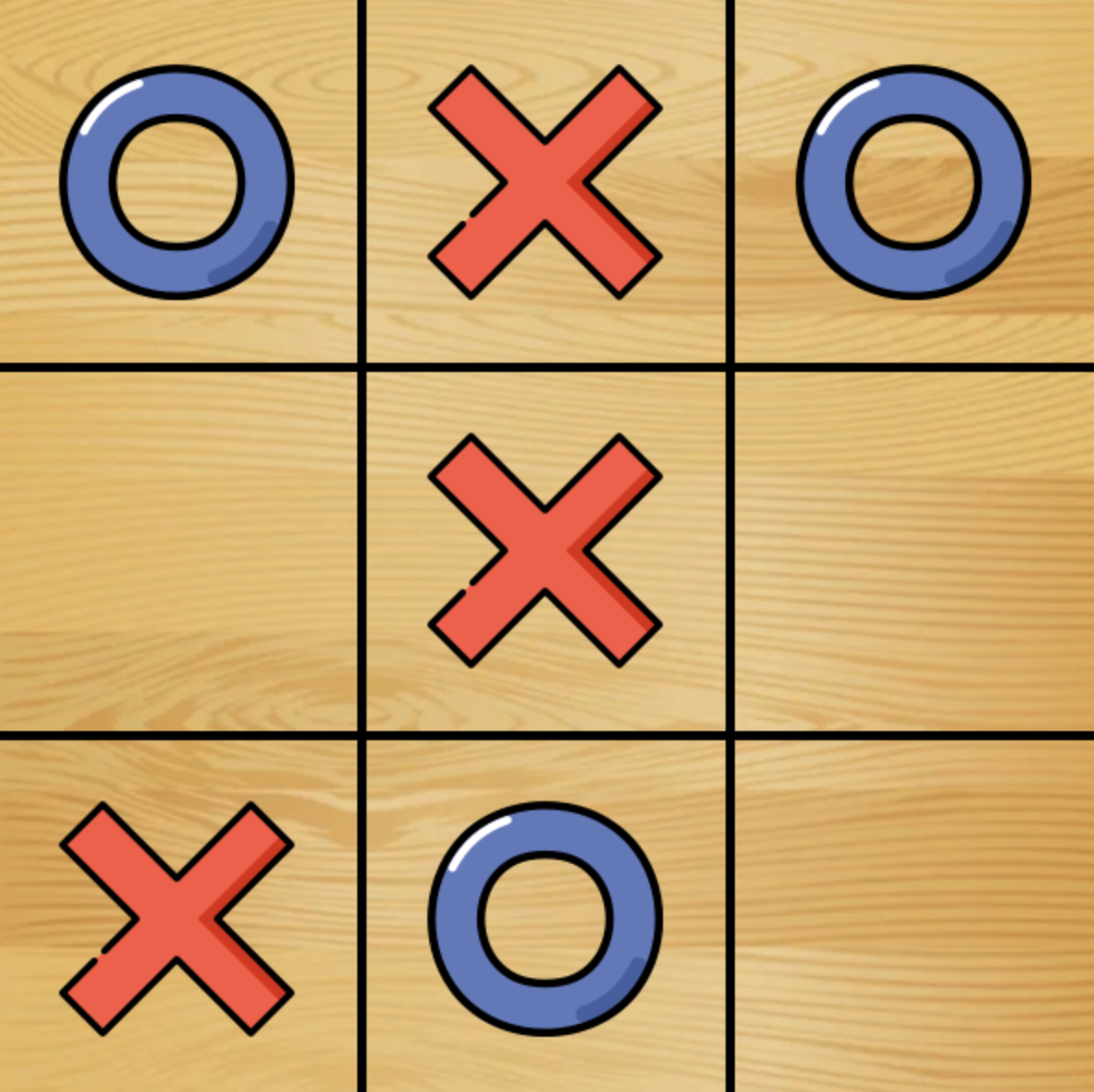

Very exciting gameplay (human is X):

Main Logic

Here's how the AI works. In general, the AI is basically performing a search algorithm to find the most optimal move in a certain state of the board. But before I explain more, there are some essentials to keep in mind.

Key terms:

- State describes a particular state of what the AI is trying to understand at a certain moment in time. This would be the board in our case.

- Actions describes a set of possible actions that can be done to manipulate a state. This would be a move in our case.

- Transition model describes the effect of performing an action on a state. In our case, this would be a new state.

- Goal test checks whether an end state is reached. We will check if board is full or if winner is detected.

- Path cost effectively calculates how good the path to a certain end state is. We will manpulate the number of moves it takes to get to an end state to get our path cost.

Key functions:

- PLAYER(s) reads state s and returns the next player to move.

- ACTIONS(s) returns all possible actions (moves) available for a given state s.

- RESULT(s, a) reads a state and action and returns the new state as a result of performing action a on the old state s.

- TERMINATE(s) is the goal test.

- UTILITY(s) returns a numerical value related to terminal state s. We will talk more about this later.

Notes:

- X always moves first.

- Player can choose to play as either sprites.

Now, the essence of this project is simple. How exactly does the AI choose what action to take? For the AI to perform optimally, we can give it an objective by utilizing the adversarial nature of the game to assign number each to describe the win value of X and O and ties. From this, we can describe the favoribility of each state and then use it to find the action that will result in the optimal state. Since the player can play as either sprites, we set X to be the player that tries to maximize the score and O to minimize. To illustrate, consider -10 for O win, 10 for X win, and 0 for tie, then 8 would represent that the current state is more favorable for X. Hence, UTILITY(s) will either return -10, 10, or 0.

Now that we have a way for the AI to understand the it's goal, we need to write a function that returns the best state value given a state s. Since X is trying to maximize, O will then try to minimize the states resulting from X's actions. Therefore, X's optimal state value will be the maximum of O minimizing each state that X's action can result in. But what is O's minimum state? Well, since X will try to maximize from each of O's resultant states, O's state value will be the minimum state value when X maximizes the state values for each possible action that O can take. It's evident that recursion is at hand here, so we have the following pseudo code:

function MAX-VALUE(state):

if TERMINAL(state):

return UTILITY(state)

v = -∞

for action in ACTIONS(state):

v = MAX(v, MIN-VALUE(RESULT(state, action)))

return v

function MIN-VALUE(state):

if TERMINAL(state):

return UTILITY(state)

v = ∞

for action in ACTIONS(state):

v = MIN(v, MAX-VALUE(RESULT(state, action)))

return v

# Source: Harvard CS50’s Artificial Intelligence with Python – Full University Course

Optimizations

Note also that some optimizations can be made here. First, our algorithm will return the most optimal move, but it may be a slower win. Therefore, we can add another parameter called depth that will track the depth needed to get to a certain end state and then we can subtract depth from 10 if we're maximizing and add depth to -10 if we're minimizing. This GFG article explains it pretty well.

def minimax(s, depth, is_max):

if terminal(s):

win_val = utility(s)

if win_val == 10:

return 10 - depth

elif win_val == -10:

return -10 + depth

else:

return 0

if is_max:

v = float('-inf')

for action in actions(s):

v = max(v, minimax(result(s, action), depth + 1, False))

return v

else:

v = float('inf')

for action in actions(s):

v = min(v, minimax(result(s, action), depth + 1, True))

return v

Next we note that sometimes, certain actions don't need to be explored. Suppose that it's X's turn and it explores the first possible action with all of its subtrees which ultimately has state value 4. Then on the next action, without fully exploring all this action's subtrees, we find that this action has a subtree state with value 3. Since the maximum state value so far is 4, and O is trying to minimize, this means that no matter what subtrees of the current action we continue to explore, O's state value will be <= 3. This means that we will have to create two variables to keep track of the lowest and highest possible values so far.

In the code, we just have to add two new parameters called alpha and beta initially set to -inf and inf respectively which keeps track of maximum and minimum values so far. Then, if we're maximizing and the value we got from a certain action is lower than beta, this tells us that we can skip all other subtrees of this action so we break out of the loop. Otherwise, we update alpha as the maximum of itself and current state value. Minimizing is just the opposite. This approach is actually called alpha-beta pruning.

def minimax(s, depth, alpha, beta, is_max):

if terminal(s):

win_val = utility(s)

if win_val == 10:

return 10 - depth

elif win_val == -10:

return -10 + depth

else:

return 0

if is_max:

v = float('-inf')

for action in actions(s):

v = max(v, minimax(result(s, action), depth + 1, alpha, beta, False))

if v > beta:

break

alpha = max(v, alpha)

return v

else:

v = float('inf')

for action in actions(s):

v = min(v, minimax(result(s, action), depth + 1, alpha, beta, True))

if v < alpha:

break

beta = min(v, beta)

return v

Now we can also implement a function to get the actual move referred to by the optimal value.

def find_best_move(s):

who_to_move = player(s)

best_val = float('-inf') if who_to_move == "X" else float('inf')

best_move = None

for action in actions(s):

val = minimax(result(s, action), 0, float('-inf'), float('inf'), who_to_move == "O")

if (who_to_move == "X" and val > best_val) or (who_to_move == "O" and val < best_val):

best_val = val

best_move = action

return best_move

*Note that the argument to is_max is as such due to player alternation.

Some nice features

- Menu/end screen

- Hovering effect for buttons